The array Q is initialized with zeros and I always chose the best action, the action that will maximize my expected reward. The reward structure is purely based on the "Life" parameter. Horizontal distance from next pair of pipesįor each state, I have two possible actions.I discretized my space over the folowing parameters. Is a nice, concise description of Q Learning. That means that I didn't have to model the dynamics of Flappy Bird how it rises and falls, reacts to clicks and other things of that nature. I used Q Learning because it is a model free form of reinformcent learning. There are many variants to be used in different situations: Policy Iteration, Value Iteration, Q Learning, etc.

It then finds itself in a new state and gets a reward based on that.

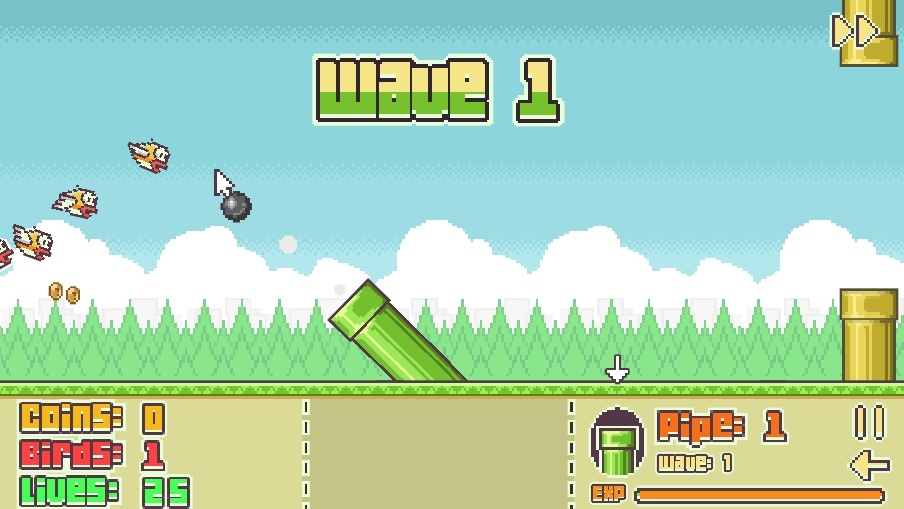

Here's the basic principle: the agent, Flappy Bird in this case, performs a certain action in a state. I ripped out the typing component and added some javascript Q Learning code to it. But it takes about 1 - 2 seconds to get a screenshot and that was definitely not fast or responsive enough. To get screenshots and send click commands. Initially, I wanted to create this hack for the Android app and I was planning to use The video above shows the results of a really well trained Flappy Bird that basically keeps dodging pipes forever. People have also created some interesting variants of the game -Īfter playing the game a few times (read few hours), I saw the opportunity to practice my machine learning skills and try and get Flappy Bird to learn how to play the game by itself. On Google Play or the App Store, it did not stop folks from creating very good replicas for the web. This is a hack for the popular game, Flappy Bird.
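To make the learning loop described above a bit more concrete, here is a minimal sketch of tabular Q Learning in JavaScript. It is not the code from the hack itself. The action labels, the second state parameter `dy`, the bucket size, the reward values, and the constants `ALPHA` and `GAMMA` are all assumptions picked for illustration. The post only says that the state is discretized, that there are two actions, that the reward depends on "Life", and that Q starts at zero with a greedy action choice.

```javascript
// Hypothetical sketch of tabular Q Learning; all names and constants are assumptions.
const ACTIONS = ["doNothing", "click"]; // assumed labels for the two actions
const ALPHA = 0.7;   // learning rate (assumed value)
const GAMMA = 1.0;   // discount factor (assumed value)
const BIN_SIZE = 10; // pixels per discretization bucket (assumed value)

// Q table: state key -> array of action values, filled with zeros on first visit.
const Q = new Map();

function qValues(stateKey) {
  if (!Q.has(stateKey)) {
    Q.set(stateKey, new Array(ACTIONS.length).fill(0));
  }
  return Q.get(stateKey);
}

// Turn continuous distances into a discrete state key.
// dx: horizontal distance to the next pipes; dy: a second, assumed parameter.
function discretize(dx, dy) {
  return Math.floor(dx / BIN_SIZE) + "," + Math.floor(dy / BIN_SIZE);
}

// Reward depends only on whether the bird is alive (the values are assumptions).
function reward(alive) {
  return alive ? 1 : -1000;
}

// Greedy choice: index of the action with the highest Q value.
// Ties go to the lowest index, which is "doNothing" here.
function bestAction(stateKey) {
  const values = qValues(stateKey);
  let best = 0;
  for (let a = 1; a < values.length; a++) {
    if (values[a] > values[best]) best = a;
  }
  return best;
}

// Standard Q Learning update:
// Q(s, a) += ALPHA * (r + GAMMA * max_a' Q(s', a') - Q(s, a))
function update(stateKey, action, r, nextStateKey) {
  const values = qValues(stateKey);
  const nextMax = Math.max(...qValues(nextStateKey));
  values[action] += ALPHA * (r + GAMMA * nextMax - values[action]);
}
```

On each game tick, a loop built on this sketch would call `discretize` on the current distances, pick `bestAction` for that state, apply the action, and then call `update` with the observed reward and the next state.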
